It’s healthy for student journalists to raise concerns about AI

Generative AI isn’t a real solution to the issues facing newsrooms. Students are right to point that out.

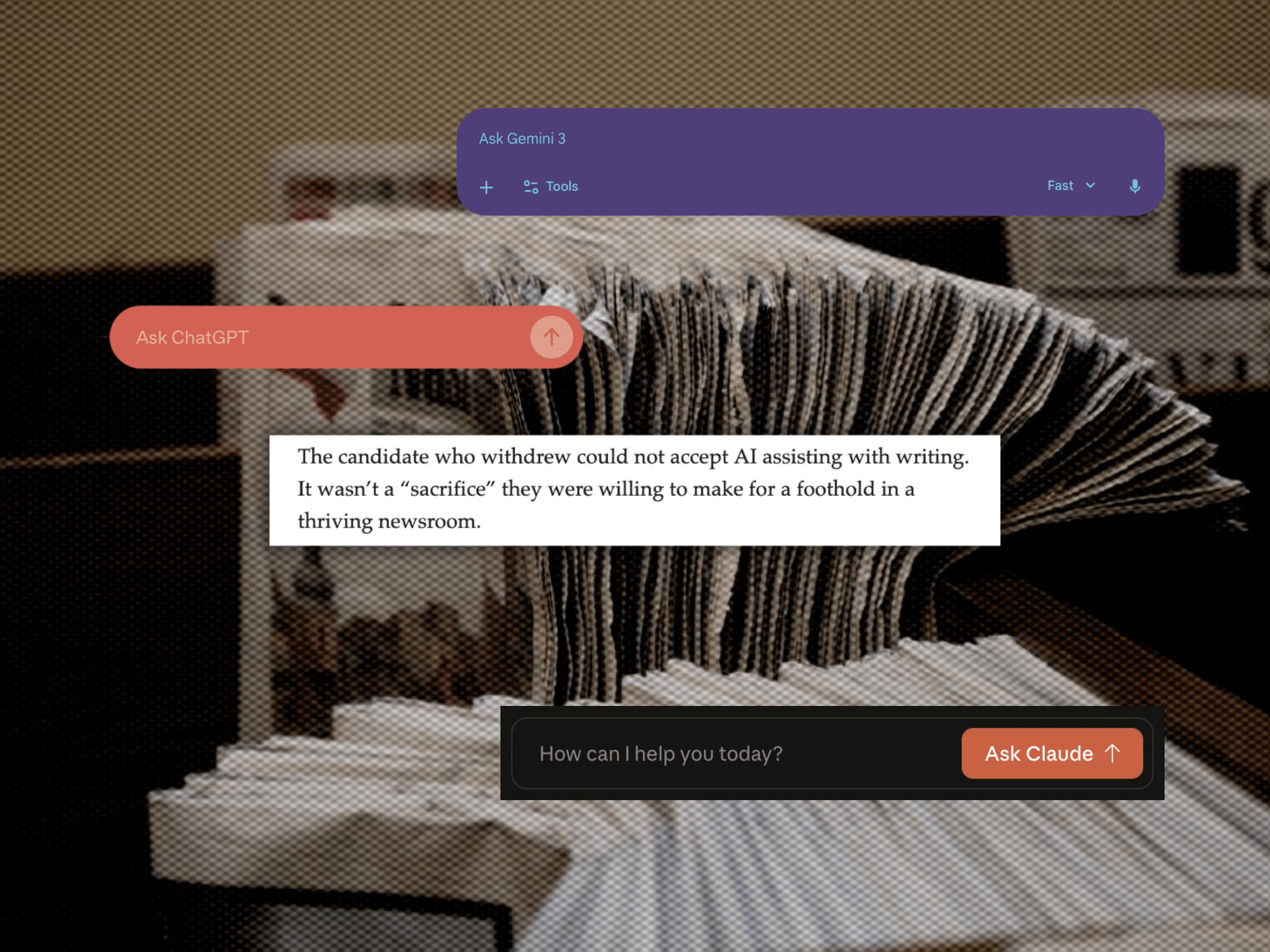

As an early-career journalist constantly reading through job listings, the reporting jobs I come across ask for knowledge of artificial intelligence (AI) tools more often than not, with some going as far as to recruit for positions with titles such as “AI-assisted reporter.” It makes me wonder whether I will reach a point where I have to choose between potentially landing a job or voicing criticisms of generative AI, the same dilemma faced by an applicant to Cleveland-based publication The Plain Dealer.

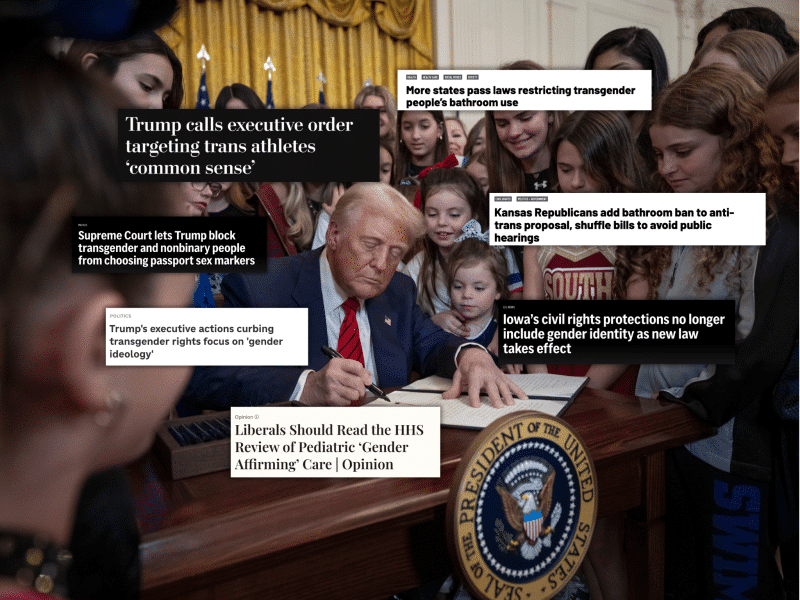

Chris Quinn, the publication’s editor, published a remarkably candid column admitting that a recent graduate withdrew their application over the publication’s practice of using AI to write articles. Quinn defended the use of generative AI to “free up” an extra weekly workday for reporters, and criticized journalism schools for fostering a negative attitude towards AI, reiterating his perspective in the Columbia Journalism Review.

Quinn is among the many journalists at all levels, early-career or veteran, who have been exploring if and how the technology should figure into our work. But as with any new development, there’s plenty to critique, and newsrooms seeking to build trust with audiences should be encouraging critical reflection on generative AI rather than putting down students hesitant to welcome it with open arms — especially when data shows readers aren’t sold on the technology’s promise.

From the classroom to the newsroom, using AI to “write” is becoming more prevalent

An October 2025 audit of AI-generated content in U.S. newspapers found that articles partially or fully written by AI became increasingly common after the launch of ChatGPT in 2022, especially in smaller local outlets.

A’lauren Gilchrist, the student representative on the National Association of Black Journalists’ board, said she’s worried about the effect the availability of AI is having on students.

“I see a lot of students are losing the motivation to source stories on their own, write stories of their own, produce authentic journalism, because they feel like ‘This tool can do it for me,’” she told The Objective.

She thinks that when students see more experienced journalists using generative AI like ChatGPT for their work, it normalizes the technology and makes using it seem more acceptable, especially if students feel their work is inadequate compared to their peers’. As editor-in-chief of her student newspaper, Gilchrist recalled an instance where a writer submitted a draft generated entirely by ChatGPT. Following this incident, she said, the paper’s advisor had a long talk with the editorial board encouraging the students to put more effort into editing and fact-checking to ensure drafts are the writer’s original work.

“Honestly, if I were interviewing for a job that told me that ‘We use AI [here],’ I would look at them sideways, because in my personal opinion, I really feel that our art and what makes journalism journalism is that we’re sourcing on our own,” Gilchrist said.

Environmental journalist Alex Ip, founder of The Xylom and creator of a Bluesky starter pack called “Generative AI Slop-free News Outlets,” said he’s observed that Quinn’s views on AI are commonly held throughout the industry, “predominantly by senior white male newsroom leaders, though it is not limited to [them].”

“I think it is partly because of the fact that they are already overrepresented, and also because minority journalists, women journalists, journalists who come from immigrant backgrounds, they’re exercising more caution because they can see what the downside looks like.”

The case for criticism of AI

The Xylom, like the other publications in Ip’s starter pack, has an explicit policy against using generative AI. Ip said that because they often publish original reporting that does not yet exist on the internet, the policy enables them to protect the intellectual property of their reporters and photojournalists and ensure that sources are being accurately portrayed.

Journalists commonly used some AI tools even before the advent of models like ChatGPT. For example, Otter.ai has been used to generate interview transcripts, and investigative reporters have used machine learning programs to analyze and classify large data sets.

But the ethics of generative AI and quality of resulting content become more questionable when the technology is used to write, research, or edit articles. Condé Nast-owned Ars Technica recently retracted an article found to contain quotes fabricated by AI. Wired and Business Insider have also removed articles after finding out they were written by AI and featured unverifiable sources.

As an environmental journalist, Ip is particularly concerned about the growth of AI driving increased water and energy consumption from the data centers needed to make it work. Even a small data center can use up to 25.5 million liters of water annually for cooling, exacerbating water scarcity in neighboring communities.

Quinn’s column justifies the use of AI by claiming that it reduces his reporters’ workload, freeing up their time to chase stories and meet with sources. However, according to a Harvard Business Review study, having access to AI tools often leads people to take on more work and widen their job scope, creating an unsustainable workload that eventually leads to burnout.

In worst-case scenarios, employers may use increased productivity associated with AI as a pretext to lay off employees, or, as in the case of Florida-based Suncoast Searchlight, fire a journalist after she raised concerns about using the technology. The articles edited by ChatGPT contained factual inaccuracies and fabricated quotes misrepresenting the writer’s skills.

As Ip pointed out, the first people on the chopping block are “pretty much predominantly women and people of color.”

AI policy has been a focal point of labor disputes in major newsrooms, including Politico, ProPublica, Los Angeles Times, and Law360.

In 2024, the union representing Politico journalists negotiated one of the first contracts in the industry to include provisions on the use of AI. Yet the following year, executives violated the contract, and the union once again took their employers to court. The ProPublica Guild is currently preparing to strike for a fair contract including guardrails on the implementation of AI.

What does this mean for students?

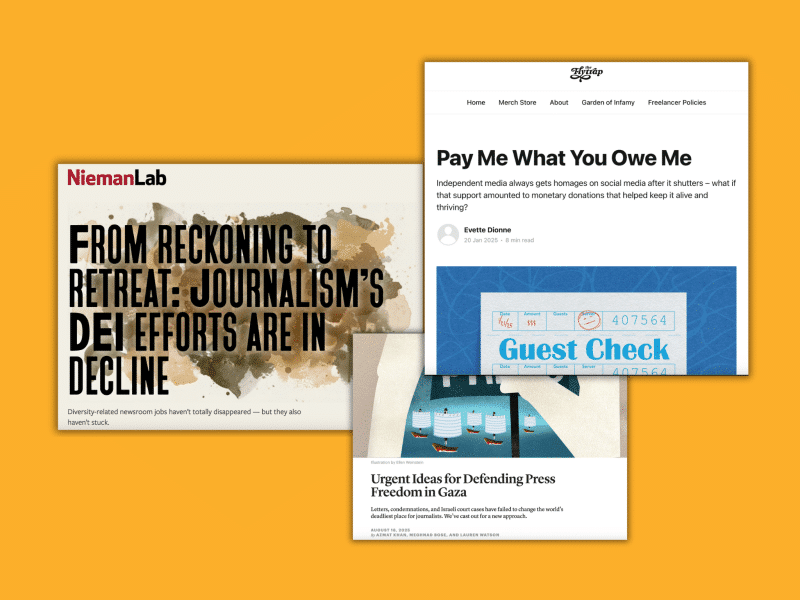

Quinn was right about one thing in his column: Students are entering the worst journalism job market in years. However, instead of asking students to abandon their morals for a chance at employment, we should be looking at the systemic factors that have made the job market the way it is.

A billionaire owner with no journalism credentials can decide to cut entire sections of a paper and lay off nearly one-half of its workforce while local newsrooms, such as The Plain Dealer, no longer have large teams to cover their counties. The current U.S. presidential administration has made it a priority to attack journalists, particularly those marginalized, and journalism in a media landscape awash with mis/disinformation. Newsrooms have been incentivized to invest in artificial intelligence, despite the fact that numerous studies have shown readers trust humans over AI-generated work.

While the struggles that newsrooms face may indeed be dire, error-riddled AI slop is not the way forward when newsrooms must work to gain and maintain the trust of their communities. Although they may be at the beginning of their careers, journalism students can bring important perspectives to the newsrooms they work with, and shouldn’t be disparaged for trying to improve the industry for both journalists and those whose stories they’re trusted with.

“I’m just hoping that people realise, overall, that AI can’t replace our authentic voices,” said Gilchrist. “If we really stand on that, I think we may see a revival in traditional journalism.”

Emma Bainbridge is a freelance journalist covering climate, policing, and stories of resistance to fascism. Find her on Instagram @emmakbainbridge or Bluesky at eksbainbridge.bsky.social.

This piece was edited by James Salanga. Copy edits by Marlee Baldridge.
